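This page describes the data structures and methods used to efficiently communicate operand buffers between the driver and the framework.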

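At model compilation time, the framework provides the values of constant operands to the driver. Depending on the operand's lifetime, its value is located either in a HIDL vector or in a shared memory pool.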

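- If the lifetime is `CONSTANT_COPY`, the value is located in the `operandValues` field of the model structure. Because the values in a HIDL vector are copied during interprocess communication (IPC), this location is typically used only for small amounts of data, such as scalar operands (for example, the activation scalar in `ADD`) and small tensor parameters (for example, the shape tensor in `RESHAPE`).
- If the lifetime is `CONSTANT_REFERENCE`, the value is located in the `pools` field of the model structure. Only the handles of the shared memory pools are duplicated during IPC, not the raw values. It is therefore more efficient to hold large amounts of data (for example, the weight parameters in convolutions) in shared memory pools than in HIDL vectors.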
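The two lifetimes above determine where a driver reads a constant's bytes from. The following Python sketch models that lookup. `OperandLifeTime`, `DataLocation`, `Operand`, and `Model` are simplified, hypothetical stand-ins loosely modeled on the HAL types, with field names adapted to Python. The pools are assumed to be already mapped into bytes-like objects.

```python
from dataclasses import dataclass
from enum import Enum


class OperandLifeTime(Enum):
    # Only the two constant lifetimes discussed above are modeled.
    CONSTANT_COPY = "CONSTANT_COPY"
    CONSTANT_REFERENCE = "CONSTANT_REFERENCE"


@dataclass
class DataLocation:
    pool_index: int  # ignored for CONSTANT_COPY
    offset: int
    length: int


@dataclass
class Operand:
    lifetime: OperandLifeTime
    location: DataLocation


@dataclass
class Model:
    operand_values: bytes  # stand-in for the operandValues HIDL vector
    pools: list            # stand-in for already-mapped shared memory pools


def constant_value(model: Model, operand: Operand) -> bytes:
    """Return the raw bytes of a constant operand's value."""
    loc = operand.location
    if operand.lifetime is OperandLifeTime.CONSTANT_COPY:
        # Small values travel inline and were copied during IPC.
        source = model.operand_values
    elif operand.lifetime is OperandLifeTime.CONSTANT_REFERENCE:
        # Large values live in a shared pool; only its handle crossed IPC.
        source = model.pools[loc.pool_index]
    else:
        raise ValueError(f"not a constant lifetime: {operand.lifetime}")
    end = loc.offset + loc.length
    if end > len(source):
        raise ValueError("operand location is out of bounds")
    return bytes(source[loc.offset:end])
```

The bounds check is included because a driver should never trust offsets it receives over IPC.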

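At model execution time, the framework provides the buffers of the input and output operands to the driver. Compile-time constants might be sent in a HIDL vector. By contrast, the input and output data of an execution are always communicated through a collection of memory pools.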
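Because execution data always goes through memory pools, a driver reads inputs from, and writes outputs into, regions of those pools. This Python sketch shows both directions. `RequestArgument` is a hypothetical, flattened stand-in loosely modeled on the HAL's request argument (an "omitted" flag plus a pool location). Each pool is assumed to be an already-mapped `bytearray`.

```python
from dataclasses import dataclass


@dataclass
class RequestArgument:
    has_no_value: bool  # an omitted optional input or output
    pool_index: int
    offset: int
    length: int


def _region(pools: list, arg: RequestArgument) -> bytearray:
    pool = pools[arg.pool_index]
    if arg.offset + arg.length > len(pool):
        raise ValueError("argument location is out of bounds")
    return pool


def read_input(pools: list, arg: RequestArgument):
    """Return the input bytes, or None if the input was omitted."""
    if arg.has_no_value:
        return None
    pool = _region(pools, arg)
    return bytes(pool[arg.offset:arg.offset + arg.length])


def write_output(pools: list, arg: RequestArgument, data: bytes) -> None:
    """Copy result bytes into the output region of a writable pool."""
    if arg.has_no_value:
        return
    if len(data) > arg.length:
        raise ValueError("output region is too small")
    pool = _region(pools, arg)
    pool[arg.offset:arg.offset + len(data)] = data
```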

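The HIDL data type `hidl_memory` is used in both compilation and execution to represent an unmapped shared memory pool. The driver should map the memory according to the name of the `hidl_memory` so that it can be used. The supported memory names are: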

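- `ashmem`: Android shared memory. For details, see Memory.
- `mmap_fd`: Shared memory backed by a file descriptor through `mmap`.
- `hardware_buffer_blob`: Shared memory backed by an AHardwareBuffer with the format `AHARDWAREBUFFER_FORMAT_BLOB`. Available from Neural Networks (NN) HAL 1.2. For details, see AHardwareBuffer.
- `hardware_buffer`: Shared memory backed by a general AHardwareBuffer that doesn't use the format `AHARDWAREBUFFER_FORMAT_BLOB`. This non-BLOB mode hardware buffer can be used only during model execution. Available from NN HAL 1.2. For details, see AHardwareBuffer.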
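The `mmap_fd` case can be illustrated with Python's standard `mmap` module. The sketch below dispatches on the memory name and maps only `mmap_fd`. `map_pool` and `map_mmap_fd` are hypothetical helpers. A real driver would extract the file descriptor, and any protection or offset metadata, from the `hidl_memory`'s native handle. Here the descriptor and size are passed in directly, and the mapping always starts at offset 0.

```python
import mmap
import tempfile


def map_mmap_fd(fd: int, size: int, writable: bool = False) -> mmap.mmap:
    """Map `size` bytes of the file behind `fd`, starting at offset 0."""
    access = mmap.ACCESS_WRITE if writable else mmap.ACCESS_READ
    return mmap.mmap(fd, size, access=access)


def map_pool(name: str, fd: int, size: int, writable: bool = False) -> mmap.mmap:
    """Choose a mapping strategy based on the hidl_memory name."""
    if name == "mmap_fd":
        return map_mmap_fd(fd, size, writable)
    # ashmem and AHardwareBuffer-backed names need Android-specific APIs.
    raise NotImplementedError(f"not handled in this sketch: {name}")


if __name__ == "__main__":
    # Usage: back a "pool" with a temporary file and map it by descriptor.
    with tempfile.TemporaryFile() as f:
        f.write(b"\x01\x02\x03\x04")
        f.flush()
        with map_pool("mmap_fd", f.fileno(), 4) as pool:
            print(pool[:])
```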

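Starting with NN HAL 1.3, NNAPI supports memory domains, which provide allocator interfaces for driver-managed buffers. Driver-managed buffers can also be used as execution inputs or outputs. For details, see Memory domains.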

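NNAPI drivers must support mapping of the `ashmem` and `mmap_fd` memory names. Starting with NN HAL 1.3, drivers must also support mapping of `hardware_buffer_blob`. Support for general non-BLOB mode `hardware_buffer` and for memory domains is optional.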
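The support rules above can be encoded as a small self-check. The helper names `required_memory_names` and `missing_support` are hypothetical, and HAL versions are represented as `(major, minor)` tuples purely for illustration. Optional names (`hardware_buffer`) are intentionally absent from the required set.

```python
def required_memory_names(hal_version: tuple) -> set:
    """Memory names an NNAPI driver must be able to map, per the rules above."""
    required = {"ashmem", "mmap_fd"}
    if hal_version >= (1, 3):
        required.add("hardware_buffer_blob")
    return required


def missing_support(hal_version: tuple, supported: set) -> set:
    """Names the driver is required to map but does not."""
    return required_memory_names(hal_version) - supported
```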

AHardwareBuffer

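AHardwareBuffer is a type of shared memory that wraps a Gralloc buffer. In Android 10, the Neural Networks API (NNAPI) supports using AHardwareBuffer, which lets the driver perform executions without copying data, improving app performance and reducing power consumption. For example, a camera HAL stack can pass AHardwareBuffer objects to NNAPI for machine learning workloads using AHardwareBuffer handles generated by the camera NDK and media NDK APIs. For details, see `ANeuralNetworksMemory_createFromAHardwareBuffer`.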

AHardwareBuffer objects used in NNAPI are passed to the driver through a `hidl_memory` struct named either `hardware_buffer` or `hardware_buffer_blob`. A `hidl_memory` named `hardware_buffer_blob` represents only AHardwareBuffer objects with the `AHARDWAREBUFFER_FORMAT_BLOB` format.
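The naming rule reduces to a check on the buffer format, combined with the earlier restriction that non-BLOB buffers are usable only during execution. In this sketch, `hidl_memory_name` and `check_usage` are hypothetical helpers. The constant's value matches `AHARDWAREBUFFER_FORMAT_BLOB` in the NDK header `<android/hardware_buffer.h>`.

```python
# Value of AHARDWAREBUFFER_FORMAT_BLOB in <android/hardware_buffer.h>.
AHARDWAREBUFFER_FORMAT_BLOB = 0x21


def hidl_memory_name(ahwb_format: int) -> str:
    """Name of the hidl_memory that carries an AHardwareBuffer of this format."""
    if ahwb_format == AHARDWAREBUFFER_FORMAT_BLOB:
        return "hardware_buffer_blob"
    return "hardware_buffer"


def check_usage(name: str, is_constant: bool) -> None:
    """Reject non-BLOB hardware buffers anywhere other than execution I/O."""
    if name == "hardware_buffer" and is_constant:
        raise ValueError("non-BLOB hardware_buffer can be used only in execution")
```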

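The information the framework requires is encoded in the `hidl_handle` field of the `hidl_memory` struct. The `hidl_handle` field wraps a `native_handle`, which encodes all of the required metadata about the AHardwareBuffer or Gralloc buffer.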

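The driver must properly decode the provided `hidl_handle` field and access the memory it describes. When the `getSupportedOperations_1_2`, `getSupportedOperations_1_1`, or `getSupportedOperations` method is called, the driver should detect whether it can decode the provided `hidl_handle` and access the memory it describes. Model preparation must fail if the `hidl_handle` field used for a constant operand isn't supported. Execution must fail if the `hidl_handle` field used for an input or output operand of the execution isn't supported. If model preparation or execution fails, the driver is recommended to return the `GENERAL_FAILURE` error code.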
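The failure rules above can be sketched as follows. Decoding a `hidl_handle` is reduced here to a hypothetical check on the memory name. A real driver would inspect the `native_handle` itself. `ErrorStatus` is a minimal stand-in for the HAL enum, and `DECODABLE_NAMES`, `supported_operations`, and `check_pools` are illustrative names only.

```python
from enum import Enum


class ErrorStatus(Enum):
    NONE = "NONE"
    GENERAL_FAILURE = "GENERAL_FAILURE"


# Hypothetical: memory names this particular driver can decode and map.
# Non-BLOB hardware_buffer is optional, so this driver leaves it out.
DECODABLE_NAMES = {"ashmem", "mmap_fd", "hardware_buffer_blob"}


def can_access(pool_name: str) -> bool:
    """Stand-in for decoding the hidl_handle and mapping the memory."""
    return pool_name in DECODABLE_NAMES


def supported_operations(const_pools_per_op: list, pool_names: list) -> list:
    """One bool per operation: False if a constant it reads is inaccessible.

    const_pools_per_op[i] lists the pool indices holding operation i's
    CONSTANT_REFERENCE operands.
    """
    return [all(can_access(pool_names[p]) for p in pools)
            for pools in const_pools_per_op]


def check_pools(pool_names: list) -> ErrorStatus:
    """Used for preparation (constant pools) and execution (I/O pools)."""
    if all(can_access(name) for name in pool_names):
        return ErrorStatus.NONE
    return ErrorStatus.GENERAL_FAILURE
```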

Memory domains

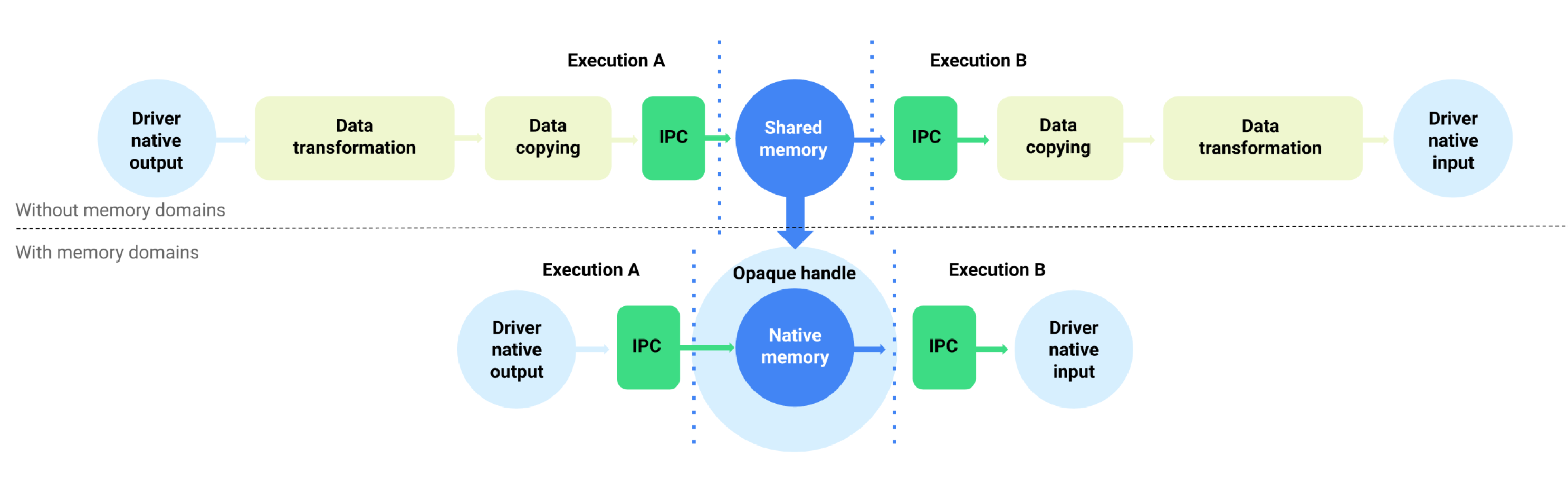

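For devices running Android 11 or higher, NNAPI supports memory domains, which provide allocator interfaces for driver-managed buffers. This allows device-native memories to be passed across executions, avoiding unnecessary data copying and transformation between consecutive executions on the same driver. Figure 1 illustrates this data flow.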

Figure 1. Buffer data flow using memory domains

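The memory domain feature is intended for tensors that are mostly internal to the driver and don't need frequent access from the client. Examples of such tensors include the state tensors in sequence models. For tensors that the client needs to access frequently on the CPU, shared memory pools are preferable.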

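To support the memory domain feature, implement `IDevice::allocate` so that the framework can request driver-managed buffer allocations. During allocation, the framework provides the following properties and usage patterns for the buffer: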

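- `BufferDesc` describes the required properties of the buffer.
- `BufferRole` describes a potential usage pattern of the buffer as an input or output of a prepared model. Multiple roles can be specified during buffer allocation, and the allocated buffer can be used only in those specified roles.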

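The allocated buffer is for driver-internal use only. The driver can choose any buffer location or data layout. After a buffer is successfully allocated, the driver's client can reference or interact with it using either the returned token or the `IBuffer` object.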

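The token from `IDevice::allocate` is provided when the buffer is referenced as one of the `MemoryPool` objects in the `Request` structure of an execution. To prevent a process from accessing a buffer allocated in another process, the driver must apply proper validation at every use of the buffer. The driver must check that the buffer is used in one of the `BufferRole` roles provided during allocation. If the usage is illegal, the driver must fail the execution immediately.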
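One way to meet these validation requirements is a token registry that records each buffer's owning process and declared roles. This Python sketch is a hypothetical design, not the NNAPI implementation. It assumes the driver can learn the caller's process ID. It also folds input versus output into an `is_input` flag on `BufferRole`, whereas the HAL passes input and output roles separately.

```python
import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class BufferRole:
    model_index: int  # which prepared model passed to allocate
    io_index: int     # which input or output of that model
    is_input: bool


@dataclass
class AllocatedBuffer:
    owner_pid: int
    roles: frozenset


class BufferTracker:
    """Hypothetical token registry for driver-managed buffers."""

    def __init__(self):
        self._buffers = {}
        self._next_token = itertools.count(1)  # 0 is left unused

    def allocate(self, owner_pid: int, roles: set) -> int:
        token = next(self._next_token)
        self._buffers[token] = AllocatedBuffer(owner_pid, frozenset(roles))
        return token

    def validate(self, token: int, caller_pid: int, role: BufferRole) -> bool:
        """True only if the token exists, belongs to the caller, and the role was declared."""
        buf = self._buffers.get(token)
        if buf is None or buf.owner_pid != caller_pid:
            return False
        return role in buf.roles
```

An execution would call `validate` for every token-backed pool in the request and fail immediately on the first `False`.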

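The `IBuffer` object is used for explicit memory copying. In certain situations, the driver's client must initialize a driver-managed buffer from a shared memory pool, or copy the buffer out to a shared memory pool. Example use cases include: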

- Initializing state tensors

- Caching intermediate results

- Falling back to CPU execution

To support these use cases, a driver that supports memory domain allocation must implement `IBuffer::copyTo` and `IBuffer::copyFrom` with `ashmem`, `mmap_fd`, and `hardware_buffer_blob`. Support for non-BLOB mode `hardware_buffer` is optional.
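The sketch below shows the two copy directions on a driver-managed buffer, with a `bytearray` standing in for both device memory and the mapped shared pool. `DriverManagedBuffer` is hypothetical. It omits the dimensions argument of the real `copyFrom`. The returned status strings are illustrative assumptions: this sketch fails `copy_to` on a never-initialized buffer and rejects size mismatches.

```python
class DriverManagedBuffer:
    """Hypothetical driver-side object behind an IBuffer."""

    def __init__(self, size: int):
        self._storage = bytearray(size)  # stand-in for device memory
        self._initialized = False

    def copy_from(self, src: bytes) -> str:
        """Initialize from a shared memory pool (e.g. a state tensor)."""
        if len(src) != len(self._storage):
            return "INVALID_ARGUMENT"
        self._storage[:] = src
        self._initialized = True
        return "NONE"

    def copy_to(self, dst: bytearray) -> str:
        """Copy out to a shared memory pool (e.g. for CPU fallback)."""
        if not self._initialized:
            return "GENERAL_FAILURE"
        if len(dst) != len(self._storage):
            return "INVALID_ARGUMENT"
        dst[:] = self._storage
        return "NONE"
```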

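During buffer allocation, the dimensions of the buffer can be deduced from two sources: the corresponding model operands of all the roles specified by `BufferRole`, and the dimensions provided in `BufferDesc`. Even with all of this dimensional information combined, the buffer might have unknown dimensions or an unknown rank. In that case, the buffer is in a flexible state. When it is used as a model input, its dimensions are fixed. When it is used as a model output, it is in a dynamic state. The same buffer can be used for outputs of different shapes in different executions, and the driver must handle buffer resizing correctly.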
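Combining the dimensional information can be sketched as a fold over shapes. This sketch uses the NNAPI convention that `0` marks an unknown extent and an empty list marks an unknown rank, and it assumes tensor operands only (scalars are not modeled). The function names are hypothetical. A result that is not fully specified corresponds to the flexible state described above.

```python
from typing import List, Optional


def combine_dimensions(a: List[int], b: List[int]) -> Optional[List[int]]:
    """Merge two shapes; [] means unknown rank, 0 means unknown extent.

    Returns None if the shapes are incompatible.
    """
    if not a:
        return list(b)
    if not b:
        return list(a)
    if len(a) != len(b):
        return None
    merged = []
    for x, y in zip(a, b):
        if x != 0 and y != 0 and x != y:
            return None
        merged.append(x if x != 0 else y)
    return merged


def deduce_buffer_dimensions(desc_dims: List[int],
                             role_operand_dims: List[List[int]]) -> Optional[List[int]]:
    """Fold the BufferDesc dimensions with every role's operand dimensions."""
    dims: Optional[List[int]] = list(desc_dims)
    for operand_dims in role_operand_dims:
        dims = combine_dimensions(dims, operand_dims)
        if dims is None:
            return None
    return dims


def is_fully_specified(dims: List[int]) -> bool:
    """False for unknown rank or any unknown extent."""
    return bool(dims) and all(d != 0 for d in dims)
```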

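Memory domains are an optional feature. A driver can decline to support a given allocation request for a number of reasons. For example:

- The requested buffer has a dynamic size.

- The driver has memory constraints that prevent it from handling large buffers.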

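Multiple threads may read from a driver-managed buffer concurrently. Simultaneous access to the buffer for writing, or for reading and writing, is undefined, but it must not crash the driver service or block the caller indefinitely. The driver can return an error or leave the buffer's contents in an indeterminate state.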
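One way to satisfy the "never crash, never block indefinitely" requirement is a non-blocking write lock. In this sketch, readers take no lock, and a writer that finds another write in flight fails immediately instead of waiting. `GuardedBuffer` and its status strings are hypothetical. A read that races a write may observe mixed contents, which the text above permits.

```python
import threading


class GuardedBuffer:
    """Hypothetical guard: concurrent reads allowed, overlapping writes rejected."""

    def __init__(self, size: int):
        self._data = bytearray(size)
        self._write_lock = threading.Lock()

    def read(self) -> bytes:
        # No lock: a read racing a write may see indeterminate contents.
        return bytes(self._data)

    def write(self, src: bytes) -> str:
        # Never block: if another write is in flight, fail instead of waiting.
        if not self._write_lock.acquire(blocking=False):
            return "GENERAL_FAILURE"
        try:
            if len(src) != len(self._data):
                return "INVALID_ARGUMENT"
            self._data[:] = src
            return "NONE"
        finally:
            self._write_lock.release()
```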