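Android 12 introduces compatible media transcoding, a feature that lets devices capture video in newer, more storage-efficient media formats such as HEVC while remaining compatible with apps. With this feature, device manufacturers can default to HEVC instead of AVC, which improves video quality and reduces storage and bandwidth requirements. On devices with compatible media transcoding enabled, Android can automatically convert a video recorded in a format such as HEVC or HDR (up to one minute long) when an app that doesn't support that format opens it. As a result, apps keep working even when videos on the device are captured in newer formats.

Compatible media transcoding is off by default. To request media transcoding, an app must declare its media capabilities. For details on declaring media capabilities, see Compatible media transcoding on the Android Developers site.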

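How it works

Compatible media transcoding consists of two main parts:

- Transcoding services in the media framework: These services use hardware to convert files from one format to another with low latency and high quality. This part includes the transcoding API, the transcoding service, an OEM plugin for custom filters, and the hardware. For more details, see Architecture overview.

- Compatible media transcoding in the media provider: This component of the media provider intercepts apps' access to media files and serves either the original file or a transcoded file, depending on the capabilities the app declares. If the app supports the media file's format, no special handling is needed. If the app doesn't support the format, the framework converts the file to an older format, such as AVC, when the app accesses the file.

Figure 1 shows an overview of the media transcoding process.

Figure 1. Overview of compatible media transcoding.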

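Supported formats

Compatible media transcoding supports the following format conversions:

- HEVC (8-bit) to AVC: The codec conversion is performed by connecting a mediacodec decoder to a mediacodec encoder.

- HDR10+ (10-bit) to AVC (SDR): The HDR-to-SDR conversion is performed using mediacodec instances and a vendor plugin that hooks into the decoder instance. For more information, see HDR to SDR encoding.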

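Supported content sources

Compatible media transcoding supports on-device media that is generated by the native OEM camera app and stored in the DCIM/Camera/ folder on the primary external volume. The feature doesn't support media on secondary storage, and it doesn't support content passed to the device through email or SD cards.

Apps access files through different file paths. The following lists describe the file paths for which transcoding is supported or bypassed:

Transcoding supported:

- App access through MediaStore APIs

- App access through direct file path APIs, including Java and native code

- App access through the Storage Access Framework (SAF)

- App access through OS share sheet intents (MediaStore URI only)

- MTP/PTP file transfer from phone to PC

Transcoding bypassed:

- File transfer off the device by ejecting the SD card

- File transfer between devices using options such as Nearby Share or Bluetooth transfer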

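Add custom file paths for transcoding

Device manufacturers can optionally add file paths under the DCIM/ directory for media transcoding. Any path outside the DCIM/ directory is rejected. Adding such paths might be necessary to satisfy carrier requirements or local regulations.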
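The DCIM/ rule can be illustrated with a small check. The following Python sketch is not the MediaProvider implementation: `is_allowed_transcoding_path` is a hypothetical helper, and both the path normalization and the case-sensitive comparison are assumptions made for illustration.

```python
import posixpath


def is_allowed_transcoding_path(relative_path: str) -> bool:
    """Return True if a relative path lies under DCIM/ (hypothetical helper)."""
    # Collapse "." and ".." segments so "DCIM/../Movies/" is not accepted.
    normalized = posixpath.normpath(relative_path)
    # Absolute paths and paths that escape upward are rejected outright.
    if normalized.startswith("/") or normalized.startswith(".."):
        return False
    # Assumption: the first path segment must be exactly "DCIM".
    return normalized.split("/")[0] == "DCIM"


print(is_allowed_transcoding_path("DCIM/JCF/"))    # True
print(is_allowed_transcoding_path("Movies/JCF/"))  # False
```

A check like this could run in a build-time lint step before the overlay is shipped, so that a rejected path is caught before it reaches a device.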

To add a file path, use the transcode path runtime resource overlay (RRO), config_supported_transcoding_relative_paths. The following example shows how to add a file path:

<string-array name="config_supported_transcoding_relative_paths" translatable="false">

<item>DCIM/JCF/</item>

</string-array>

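To verify the configured file paths, use the following command:

adb shell dumpsys activity provider com.google.android.providers.media.module/com.android.providers.media.MediaProvider | head -n 20

Architecture overview

This section describes the architecture of the media transcoding feature.

Figure 2. Media transcoding architecture.

The media transcoding architecture consists of the following components:

- MediaTranscodingManager system API: Interface that lets a client communicate with the MediaTranscoding service. The MediaProvider module uses this API.

- MediaTranscodingService: Native service that manages client connections, schedules transcoding requests, and handles bookkeeping for TranscodingSessions.

- MediaTranscoder: Native library that performs the transcoding. This library is built on top of the media framework NDK so that it's compatible with modules.

Compatible media transcoding logs transcoding metrics in both the service and the media transcoder. The client-side and service-side code lives in the MediaProvider module, which allows for timely bug fixes and updates.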

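File access

Compatible media transcoding is built on top of Filesystem in Userspace (FUSE), the file system used for scoped storage. FUSE lets the MediaProvider module examine file operations in user space and gate access to files according to a policy that allows, denies, or redacts access.

When an app attempts to access a file, the FUSE daemon intercepts the app's read access. If the app supports the newer format (such as HEVC), the original file is returned. If the app doesn't support the format, the system transcodes the file to an older format (such as AVC), or returns the file from the cache if a transcoded version is already available.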
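The read-path decision described above can be sketched as follows. This is a simplified model under stated assumptions, not the FUSE daemon's code: `TranscodeCache`, `transcode_to_avc`, and `resolve_read` are hypothetical names, and the transcoding step is replaced by a placeholder that returns a made-up file name.

```python
from dataclasses import dataclass, field


@dataclass
class TranscodeCache:
    """Hypothetical in-memory stand-in for the cache of transcoded files."""
    entries: dict = field(default_factory=dict)


def transcode_to_avc(path: str) -> str:
    # Placeholder for the real transcoding step; the output name is made up.
    return path + ".avc.mp4"


def resolve_read(path: str, file_format: str, app_supported: set,
                 cache: TranscodeCache) -> str:
    """Pick the file an app receives when it opens `path`."""
    if file_format in app_supported:
        return path  # The app handles the format: serve the original file.
    if path not in cache.entries:
        # No transcoded version exists yet, so create one and remember it.
        cache.entries[path] = transcode_to_avc(path)
    return cache.entries[path]  # Serve the transcoded (possibly cached) file.
```

Calling `resolve_read` twice for the same file and an app that supports only AVC transcodes once and serves the cached result the second time.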

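Request transcoded files

Compatible media transcoding is disabled by default. This means that on a device that supports HEVC, Android doesn't transcode files unless an app specifies transcoding in its manifest file or the app is listed in the force transcode list.

Apps can request transcoded assets using the following options:

- Declare unsupported formats in the manifest file. For details, see Declare capabilities in a resource and Declare capabilities in code.

- Add the app to the force transcode list that's included in the MediaProvider module. This enables transcoding for apps that haven't updated their manifest file. After an app updates its manifest file with unsupported formats, it must be removed from the force transcode list. Device manufacturers can nominate their apps to be added to or removed from the force transcode list by submitting a patch or reporting a bug. The Android team reviews the list periodically and might remove apps from it.

- Disable supported formats at run time with the app compatibility framework (users can also disable this for each app in Settings).

- Open a file with MediaStore while explicitly specifying unsupported formats with the openTypedAssetFileDescriptor API.

For USB transfers (device to PC), transcoding is disabled by default, but users can enable it with the Convert videos to AVC toggle in the USB Preferences settings screen, as shown in Figure 3.

Figure 3. Toggle to enable media transcoding in the USB Preferences screen.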

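Restrictions on requesting transcoded files

To prevent transcoding requests from locking up system resources for extended periods, apps that request transcoding sessions are limited to:

- 10 consecutive sessions

- A total playback duration of three minutes

If an app exceeds these limits, the framework returns the original file descriptor.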
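As a rough illustration of how such limits might be enforced, the sketch below tracks a per-app budget. `SessionBudget` is a hypothetical class, and the exact boundary behavior (whether the tenth session or exactly three minutes is still allowed) and the conditions under which counters reset are assumptions that the text doesn't specify.

```python
MAX_CONSECUTIVE_SESSIONS = 10  # Limit stated in the list above.
MAX_TOTAL_DURATION_S = 3 * 60  # Three minutes of total playback duration.


class SessionBudget:
    """Hypothetical per-app tracker; the reset policy is not modeled."""

    def __init__(self):
        self.sessions = 0
        self.total_duration_s = 0.0

    def request(self, video_duration_s: float) -> str:
        """Return "transcoded" while within limits, otherwise "original"."""
        over_sessions = self.sessions + 1 > MAX_CONSECUTIVE_SESSIONS
        over_duration = (self.total_duration_s + video_duration_s
                         > MAX_TOTAL_DURATION_S)
        if over_sessions or over_duration:
            # Mirrors the text: the app gets the original file descriptor.
            return "original"
        self.sessions += 1
        self.total_duration_s += video_duration_s
        return "transcoded"
```

Under these assumptions, an app that opens eleven 10-second clips in a row receives ten transcoded files and then the original for the eleventh.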

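Device requirements

To support compatible media transcoding, devices must meet the following requirements:

- The device has HEVC encoding enabled by default in the native camera app

- (Devices that support HDR-to-SDR transcoding) The device supports HDR video capture

To ensure device performance for media transcoding, video hardware and storage read/write access performance must be optimized. When media codecs are configured with priority equal to 1, the codecs must operate at the highest possible throughput. We recommend a transcoding performance of at least 200 fps. To test your hardware performance, run the media transcoder benchmark at frameworks/av/media/libmediatranscoding/transcoder/benchmark.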
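To put the 200 fps recommendation in perspective, the following arithmetic sketch estimates how long a clip takes to transcode. The 30 fps capture rate is an assumption chosen for illustration, and `transcode_time_s` is a hypothetical helper that ignores I/O and setup overhead.

```python
def transcode_time_s(duration_s: float, capture_fps: float,
                     transcode_fps: float) -> float:
    """Estimate transcoding time as total frames divided by throughput."""
    frames = duration_s * capture_fps
    return frames / transcode_fps


# A one-minute clip captured at an assumed 30 fps has 1800 frames.
print(transcode_time_s(60, 30, 200))  # 9.0
```

At the recommended throughput, a clip at the one-minute limit would take roughly nine seconds under these assumptions.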

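Validation

To validate compatible media transcoding, run the following CTS tests:

- android.media.mediatranscoding.cts

- android.mediaprovidertranscode.cts

Enable media transcoding globally

To test the media transcoding framework or app behavior with transcoding, you can enable or disable compatible media transcoding globally. On the Settings > System > Developer > Media transcoding developer options page, turn on the Override transcoding defaults toggle, then set the Enable transcoding toggle to on or off. If this setting is enabled, media transcoding might occur in the background for apps other than the one you're developing.

Check transcoding status

During testing, you can use the following ADB shell command to check the transcoding status, including current and past transcoding sessions:

adb shell dumpsys media.transcoding

Extend video length limit

For testing purposes, you can extend the one-minute video length limit for transcoding with the following command. A reboot might be required after running this command.

adb shell device_config put storage_native_boot transcode_max_duration_ms <LARGE_NUMBER_IN_MS>

AOSP source and references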

The following is AOSP source code related to compatible media transcoding.

Transcoding system API (used only by MediaProvider)

ApplicationMediaCapabilities API

frameworks/base/apex/media/framework/java/android/media/ApplicationMediaCapabilities.java

MediaTranscoding service

frameworks/av/services/mediatranscoding/

frameworks/av/media/libmediatranscoding/

Native MediaTranscoder

frameworks/av/media/libmediatranscoding/transcoder

HDR sample plugin for MediaTranscoder

MediaProvider file interception and transcoding code

MediaTranscoder benchmark

frameworks/av/media/libmediatranscoding/transcoder/benchmark

CTS tests

cts/tests/tests/mediatranscoding/

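HDR to SDR encoding

To support HDR to SDR encoding, device manufacturers can use the AOSP sample Codec 2.0 filter plugin located in /platform/frameworks/av/media/codec2/hidl/plugin/. This section describes how the filter plugin works, how to implement the plugin, and how to test the plugin.

If a device doesn't include a plugin that supports HDR to SDR encoding, an app that accesses an HDR video gets the original file descriptor regardless of the media capabilities the app declares in its manifest.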

工作原理

本部分将介绍 Codec 2.0 过滤器插件的一般行为。

背景

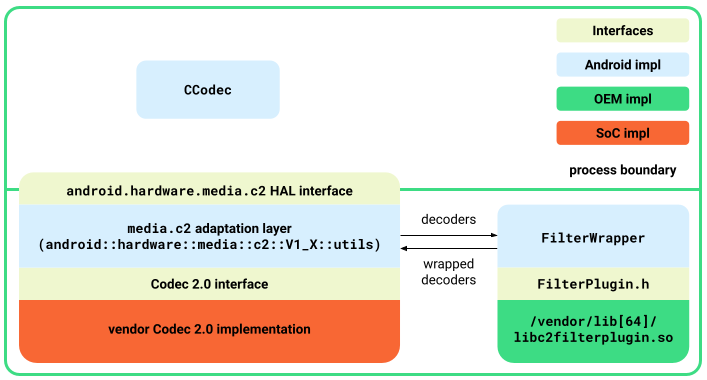

Android 在 Codec 2.0 接口与位于 android::hardware::media::c2 的 android.hardware.media.c2 HAL 接口之间提供了一个适配层实现。对于过滤器插件,AOSP 中包含一种封装容器机制,用于将解码器与过滤器插件封装在一起。MediaCodec 将这些封装的组件视为具有过滤功能的解码器。

概览

FilterWrapper 类接受供应商编解码器,并将封装的编解码器返回到 media.c2 适配层。FilterWrapper 类通过 FilterWrapper::Plugin API 加载 libc2filterplugin.so,并记录来自插件的可用过滤器。在创建时,FilterWrapper 会实例化所有可用过滤器。在启动时,它只会启动那些更改缓冲区的过滤器。

图 4. 过滤器插件架构。

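Filter plugin interface

The FilterPlugin.h interface defines the following APIs to expose filters:

- std::shared_ptr<C2ComponentStore> getComponentStore(): Returns a C2ComponentStore object that contains filters. This store is separate from the one exposed by the vendor's Codec 2.0 implementation. Typically, this store contains only the filters used by the FilterWrapper class.

- bool describe(C2String name, Descriptor *desc): Describes filters in addition to what's available through C2ComponentStore. The following descriptions are defined:

  - controlParam: Parameters that control the filter's behavior. For example, for an HDR-to-SDR tone mapper, the control parameter is the target transfer function.

  - affectedParams: Parameters that are affected by the filtering operation. For example, for an HDR-to-SDR tone mapper, the affected parameters are the color aspects.

- bool isFilteringEnabled(const std::shared_ptr<C2ComponentInterface> &intf): Returns true if the filter component alters buffers. For example, a tone-mapping filter returns true if the target transfer function is SDR and the input transfer function is HDR (HLG or PQ).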
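The tone-mapper example for isFilteringEnabled can be expressed as a small predicate. This Python sketch only models the decision logic. The real interface is the C++ API in FilterPlugin.h, and the string values used here for transfer functions are illustrative assumptions.

```python
HDR_TRANSFERS = {"HLG", "PQ"}  # HDR transfer functions named in the text.


def is_filtering_enabled(input_transfer: str, target_transfer: str) -> bool:
    """Model of the tone-mapper rule: enabled only for HDR input, SDR target."""
    return target_transfer == "SDR" and input_transfer in HDR_TRANSFERS


print(is_filtering_enabled("PQ", "SDR"))   # True
print(is_filtering_enabled("SDR", "SDR"))  # False
```

A filter for which this predicate is false leaves buffers untouched, which is why the wrapper doesn't need to start it.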

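FilterWrapper details

This section describes the details of the FilterWrapper class.

Creation

The wrapped component instantiates the underlying decoder and all defined filters at creation.

Query and configuration

The wrapped component separates incoming parameters from query or configuration requests according to the filter descriptions. For example, configuration of a filter's control parameter is routed to the corresponding filter, and the affected parameters from filters are present in the queries (instead of being read from the decoder, which holds the unaffected parameters).

Figure 5. Query and configuration.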
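The parameter routing for query and configuration can be sketched as follows. This is an illustrative model rather than the FilterWrapper code: `FilterDescriptor`, `route_config`, and `route_query` are hypothetical names, parameters are represented as plain strings, and the choice to let the first matching filter own a parameter is an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class FilterDescriptor:
    """Hypothetical stand-in for a filter's description."""
    name: str
    control_params: set = field(default_factory=set)   # Routed on config.
    affected_params: set = field(default_factory=set)  # Answered on query.


def route_config(params: dict, filters: list) -> dict:
    """Split configuration parameters between filters and the decoder."""
    routed = {"decoder": {}}
    for key, value in params.items():
        owner = next((f.name for f in filters if key in f.control_params),
                     "decoder")
        routed.setdefault(owner, {})[key] = value
    return routed


def route_query(keys: list, filters: list) -> dict:
    """Decide which component answers each queried parameter."""
    return {
        key: next((f.name for f in filters if key in f.affected_params),
                  "decoder")
        for key in keys
    }
```

With a tone mapper whose control parameter is the target transfer function and whose affected parameter is the color aspects, a configuration of the target transfer goes to the filter while a width setting goes to the decoder, and a query for color aspects is answered by the filter.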

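Start

At start, the wrapped component starts the decoder and all filters that alter buffers. If no filter is enabled, the wrapped component starts the decoder and passes buffers and commands through to the decoder itself.

Buffer handling

Figure 6. Buffer handling.

Buffers queued to the wrapped decoder go to the underlying decoder. The wrapped component gets the output buffer from the decoder through an onWorkDone_nb() callback, then queues it to the filters. The final output from the last filter is reported to the client.

For this buffer handling to work, the wrapped component must configure C2PortBlockPoolsTuning to the last filter so that the framework outputs buffers from the expected block pool.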
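The start and buffer-handling behavior can be modeled as a simple pipeline. The sketch below is a simplified, synchronous illustration and makes several assumptions: `WrappedComponent` is a hypothetical class, buffers are plain dictionaries, filters are dictionaries with `enabled` and `process` entries, and block pool configuration is not modeled.

```python
from typing import Callable, List

Buffer = dict  # Simplified stand-in for a decoded output buffer.


class WrappedComponent:
    """Hypothetical model of a decoder wrapped together with filters."""

    def __init__(self, decode: Callable[[bytes], Buffer], filters: List[dict]):
        self.decode = decode
        self.filters = filters  # Each entry: {"enabled": bool, "process": fn}.
        self.active = []

    def start(self):
        # Only the filters that alter buffers are started.
        self.active = [f for f in self.filters if f["enabled"]]

    def queue(self, data: bytes) -> Buffer:
        buf = self.decode(data)  # Decoder output (onWorkDone_nb() in the text).
        for f in self.active:    # Pass through each started filter in order.
            buf = f["process"](buf)
        return buf               # Final output reported to the client.
```

When no filter is enabled, `active` stays empty and `queue` returns the decoder output unchanged, which corresponds to the passthrough case described for start.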

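Stop, reset, and release

At stop, the wrapped component stops the decoder and all enabled filters that were started. At reset and release, all components are reset or released regardless of whether they are enabled.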

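Implement the sample filter plugin

To enable the plugin, do the following:

- Implement the FilterPlugin interface in a library and drop it at /vendor/lib[64]/libc2filterplugin.so.

- Add additional permissions to mediacodec.te if necessary.

- Update the adaptation layer to Android 12 and rebuild the media.c2 service.

Test the plugin

To test the sample plugin, do the following:

- Rebuild and flash the device.

- Build the sample plugin with the following command:

m sample-codec2-filter-plugin

- Remount the device and rename the vendor plugin so that the codec service recognizes it.

adb root
adb remount
adb reboot
adb wait-for-device
adb root
adb remount
adb push /out/target/<...>/lib64/sample-codec2-filter-plugin.so \
    /vendor/lib64/libc2filterplugin.so
adb push /out/target/<...>/lib/sample-codec2-filter-plugin.so \
    /vendor/lib/libc2filterplugin.so
adb reboot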