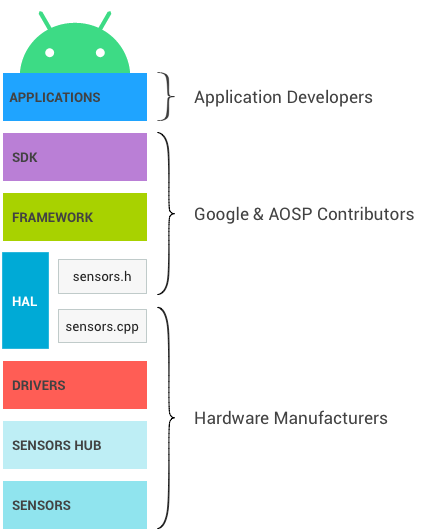

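Figure 1 shows the Android sensor stack. Each component communicates only with the components directly above and below it, although some sensors can bypass the sensor hub when one is present. Control flows from the applications down to the sensors, and data flows from the sensors up to the applications.

Figure 1. Layers of the Android sensor stack and their respective owners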

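SDK

Applications access sensors through the Sensors SDK (Software Development Kit) API. The SDK contains functions to list the available sensors and to register to a sensor.

When registering to a sensor, an application specifies its preferred sampling frequency and its latency requirements.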

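- For example, an application might register to the default accelerometer, requesting events at 100 Hz and allowing events to be reported with up to 1 second of latency.

- The application will then receive events from the accelerometer at a rate of at least 100 Hz, possibly delayed by up to 1 second.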
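The Java SDK expresses both parameters in microseconds, for example in the `SensorManager.registerListener(SensorEventListener, Sensor, int samplingPeriodUs, int maxReportLatencyUs)` overload. The Python sketch below only models the unit conversion for the 100 Hz / 1 second example. `RegistrationRequest` and `make_request` are hypothetical names and are not part of any Android API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegistrationRequest:
    """Hypothetical model of what an app asks for when registering to a sensor."""
    sensor: str
    sampling_period_us: int     # requested time between consecutive events
    max_report_latency_us: int  # how long events may be held back before delivery


def make_request(sensor: str, rate_hz: float, max_latency_s: float) -> RegistrationRequest:
    # A rate of f Hz corresponds to a sampling period of 1/f seconds.
    period_us = round(1_000_000 / rate_hz)
    latency_us = round(max_latency_s * 1_000_000)
    return RegistrationRequest(sensor, period_us, latency_us)


# The example from the text: default accelerometer, 100 Hz, up to 1 s of latency.
req = make_request("accelerometer", rate_hz=100, max_latency_s=1.0)
print(req.sampling_period_us, req.max_report_latency_us)
```

A 100 Hz request therefore becomes a 10,000 µs sampling period. As described below, that period is a preference and not a guarantee.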

See the developer documentation for more information on the SDK.

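Framework

The framework is in charge of linking the several applications to the HAL. The HAL itself is single-client. Without this multiplexing at the framework level, only one application could access each sensor at any given time.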

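- When the first application registers to a sensor, the framework sends a request to the HAL to activate the sensor.

- When additional applications register to the same sensor, the framework takes the requirements of each application into account and sends the updated requested parameters to the HAL.

- After the last application registered to a sensor unregisters from it, the framework sends a request to the HAL to deactivate the sensor so that no power is consumed unnecessarily.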
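The three steps above can be modeled with a small Python sketch. `FakeHal` and `SensorMultiplexer` are hypothetical stand-ins. The `activate` and `batch` method names loosely echo the HAL's entry points, but the real signatures differ. The sketch combines requests by taking the smallest sampling period and the smallest latency. That rule is an assumption consistent with the multiplexing behavior described in this section; it is not copied from the framework's code.

```python
from dataclasses import dataclass, field


@dataclass
class FakeHal:
    """Stand-in for the single-client HAL; records every call it receives."""
    log: list = field(default_factory=list)

    def activate(self, sensor, enabled):
        self.log.append(("activate", sensor, enabled))

    def batch(self, sensor, period_us, latency_us):
        self.log.append(("batch", sensor, period_us, latency_us))


class SensorMultiplexer:
    """Hypothetical framework-side layer that shares one HAL among many clients."""

    def __init__(self, hal):
        self.hal = hal
        self.clients = {}  # sensor -> {client: (period_us, latency_us)}

    def register(self, client, sensor, period_us, latency_us):
        reqs = self.clients.setdefault(sensor, {})
        first = not reqs
        reqs[client] = (period_us, latency_us)
        self._push(sensor)  # send the combined parameters to the HAL
        if first:
            self.hal.activate(sensor, True)  # first client: turn the sensor on

    def unregister(self, client, sensor):
        reqs = self.clients.get(sensor)
        if reqs is None or client not in reqs:
            return  # unknown client: nothing to change
        del reqs[client]
        if reqs:
            self._push(sensor)  # remaining clients may allow slower settings
        else:
            del self.clients[sensor]
            self.hal.activate(sensor, False)  # last client gone: save power

    def _push(self, sensor):
        reqs = self.clients[sensor].values()
        # The fastest rate and the tightest latency satisfy every client at once.
        period = min(p for p, _ in reqs)
        latency = min(l for _, l in reqs)
        self.hal.batch(sensor, period, latency)


hal = FakeHal()
mux = SensorMultiplexer(hal)
mux.register("app_a", "accel", 20_000, 1_000_000)  # 50 Hz, 1 s latency
mux.register("app_b", "accel", 10_000, 200_000)    # 100 Hz, 0.2 s latency
mux.unregister("app_b", "accel")
mux.unregister("app_a", "accel")
for entry in hal.log:
    print(entry)
```

While both applications are registered, the HAL is configured for 100 Hz and 0.2 s. When `app_b` leaves, the parameters relax back to what `app_a` asked for.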

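Impact of multiplexing

The need for a multiplexing layer in the framework explains several design decisions.

- When an application requests a specific sampling frequency, there is no guarantee that events won't arrive at a faster rate. If another application requested the same sensor at a faster rate, the first application also receives events at that faster rate.

- The requested maximum reporting latency is not guaranteed either: applications might receive events with much less latency than they requested.

- Besides the sampling frequency and the maximum reporting latency, applications cannot configure sensor parameters.

  - For example, imagine a physical sensor that can operate in both a "high accuracy" mode and a "low power" mode.

  - Only one of those two modes can be used on an Android device. Otherwise, one application could request the high accuracy mode and another the low power mode, and the framework would have no way to satisfy both. Because the framework must always be able to satisfy all of its clients, exposing both modes is not an option.

- There is no mechanism to send data down from the applications to the sensors or their drivers. This ensures that one application cannot modify the behavior of a sensor and thereby break other applications.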
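Because every client receives the fastest rate that any client requested, an application that depends on a particular rate may need to thin out events itself. The Python sketch below assumes event timestamps in nanoseconds, which is how `SensorEvent.timestamp` is expressed. `decimate` is a hypothetical helper and not an SDK function.

```python
def decimate(timestamps_ns, requested_period_ns):
    """Keep only events spaced at least `requested_period_ns` apart (hypothetical client-side filter)."""
    kept = []
    last = None
    for t in timestamps_ns:
        if last is None or t - last >= requested_period_ns:
            kept.append(t)
            last = t
    return kept


# Another app pushed the sensor to 200 Hz (5 ms period); this app only wanted 100 Hz (10 ms).
incoming = [i * 5_000_000 for i in range(10)]  # 0 ms, 5 ms, ..., 45 ms
kept = decimate(incoming, 10_000_000)
print(len(incoming), len(kept))  # 10 5
```

Real timestamps jitter, so a production filter would likely accept events slightly earlier than the exact requested period rather than drop them.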

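Sensor fusion

The Android framework provides a default implementation for some composite sensors. When a device has a gyroscope, an accelerometer, and a magnetometer, but no rotation vector, gravity, or linear acceleration sensors, the framework implements those sensors so that applications can still use them.

The default implementation does not have access to all the data that other implementations can access, and it must run on the SoC. It is therefore neither as accurate nor as power efficient as other implementations can be. Whenever possible, device manufacturers should define their own fused sensors rather than rely on this default implementation. These include the rotation vector, gravity, and linear acceleration sensors, as well as newer composite sensors such as the game rotation vector. Device manufacturers can also ask sensor chip vendors to provide an implementation.

The default sensor fusion implementation is not maintained and might cause devices that rely on it to fail CTS.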
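To make "composite sensor" concrete, the Python sketch below derives gravity and linear acceleration from the accelerometer alone, using a simple low-pass filter. The filter is similar to the one shown in the Android developer guide on motion sensors. This is deliberately a much simpler technique than the framework's fusion, which also uses the gyroscope and magnetometer. It illustrates why a naive implementation is less accurate: the gravity estimate lags whenever the device moves. The smoothing factor `ALPHA = 0.8` is an assumed value.

```python
ALPHA = 0.8  # smoothing factor; an assumed value that a real device would tune


def split_gravity(samples, alpha=ALPHA):
    """Separate gravity from linear acceleration with a simple low-pass filter.

    samples: iterable of (x, y, z) accelerometer readings in m/s^2.
    Returns (gravity_estimates, linear_acceleration_estimates).
    """
    gravity = [0.0, 0.0, 0.0]
    g_out, lin_out = [], []
    for s in samples:
        # Low-pass: gravity changes slowly, so keep most of the previous estimate.
        gravity = [alpha * g + (1 - alpha) * v for g, v in zip(gravity, s)]
        # What remains after removing gravity is treated as linear acceleration.
        linear = [v - g for v, g in zip(s, gravity)]
        g_out.append(tuple(gravity))
        lin_out.append(tuple(linear))
    return g_out, lin_out


# Device lying flat and still: the gravity estimate converges toward (0, 0, 9.81).
g, lin = split_gravity([(0.0, 0.0, 9.81)] * 50)
print(g[-1], lin[-1])
```

The first few outputs are wrong: gravity starts at zero and takes dozens of samples to converge. A gyroscope-aided fusion avoids this kind of error.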

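Deep dive

This section provides background information for maintainers of the Android Open Source Project (AOSP) framework code. It is not relevant to hardware manufacturers.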

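JNI

The framework uses a Java Native Interface (JNI) associated with android.hardware and located in the frameworks/base/core/jni/ directory. This code calls lower-level native code to obtain access to the sensor hardware.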

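Native framework

The native framework is defined in frameworks/native/ and provides a native equivalent of the android.hardware package. The native framework calls the Binder IPC proxies to obtain access to sensor-specific services.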

Binder IPC

The Binder IPC proxies facilitate communication across process boundaries.

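HAL

The Sensors Hardware Abstraction Layer (HAL) API is the interface between the hardware drivers and the Android framework. It consists of one HAL interface, sensors.h, and one HAL implementation, which we refer to as sensors.cpp.

The interface is defined by Android and AOSP contributors, and the implementation is provided by the device manufacturer.

The sensor HAL interface is located in hardware/libhardware/include/hardware. See sensors.h for details.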

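Release cycle

The HAL implementation specifies which version of the HAL interface it implements by setting your_poll_device.common.version. The existing HAL interface versions are defined in sensors.h, and functionality is tied to those versions.

The Android framework currently supports versions 1.0 and 1.3, but support for version 1.0 will soon be dropped. This documentation describes the behavior of version 1.3, to which all devices should upgrade. For details on upgrading to version 1.3, see HAL version deprecation.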
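In sensors.h the version is a packed integer built from macros such as `SENSORS_DEVICE_API_VERSION_1_3`. The Python sketch below ignores that encoding and models versions as `(major, minor)` tuples. It illustrates how a framework-side check could classify a HAL's reported version according to the support policy described above. `check_hal_version` and the three result strings are hypothetical.

```python
# Hypothetical policy table mirroring the text: 1.0 and 1.3 are supported, 1.0 is on its way out.
SUPPORTED = {(1, 0), (1, 3)}
DEPRECATED = {(1, 0)}


def check_hal_version(version):
    """Classify a HAL-reported (major, minor) version as 'ok', 'deprecated', or 'unsupported'."""
    if version not in SUPPORTED:
        return "unsupported"
    if version in DEPRECATED:
        return "deprecated"
    return "ok"


for v in [(1, 3), (1, 0), (1, 1)]:
    print(v, check_hal_version(v))
```

Keeping the policy in two small sets means that dropping version 1.0 is a one-line change to `SUPPORTED`.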

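Kernel driver

The sensor drivers interact with the physical devices. In some cases, the HAL implementation and the drivers are the same software entity. In other cases, the hardware integrator asks the sensor chip manufacturers to provide the drivers but writes the HAL implementation itself.

In all cases, the HAL implementation and the kernel drivers are the responsibility of the hardware manufacturer, and Android does not prescribe a preferred way to write them.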

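Sensor hub

A device's sensor stack can optionally include a sensor hub. A hub is useful for performing low-level computation at low power while the SoC is in suspend mode. For example, step counting or sensor fusion can run on these chips. The hub is also a good place to implement sensor batching, with hardware FIFOs for sensor events. See Batching for more information.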
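The Python sketch below illustrates batching on a hub. Events accumulate in a FIFO while the SoC sleeps and are handed over in one batch when the FIFO fills or when the oldest event reaches the maximum reporting latency. `HubFifo` is hypothetical and simplified. In particular, it always delivers the batch and ignores the distinction between wake-up and non-wake-up sensors, which in practice determines whether a full FIFO wakes the SoC or loses events.

```python
from collections import deque


class HubFifo:
    """Hypothetical sensor-hub FIFO that batches events while the SoC sleeps."""

    def __init__(self, capacity, max_latency_ns):
        self.events = deque()
        self.capacity = capacity
        self.max_latency_ns = max_latency_ns

    def push(self, timestamp_ns, value):
        """Store one event; return the batch to deliver to the SoC, or None."""
        self.events.append((timestamp_ns, value))
        oldest_ns = self.events[0][0]
        full = len(self.events) >= self.capacity
        too_old = timestamp_ns - oldest_ns >= self.max_latency_ns
        if full or too_old:
            batch = list(self.events)
            self.events.clear()
            return batch  # on real hardware: raise an interrupt, the SoC drains the FIFO
        return None


fifo = HubFifo(capacity=1000, max_latency_ns=1_000_000_000)  # 1 s max latency
batches = []
for i in range(250):
    batch = fifo.push(i * 10_000_000, i)  # one event every 10 ms (100 Hz)
    if batch:
        batches.append(batch)
print(len(batches), [len(b) for b in batches], len(fifo.events))
```

At 100 Hz with a 1 s latency, the SoC is interrupted about once per second instead of 100 times per second. That reduction is where the power savings come from.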

Note: To develop new ContextHub features that use new sensors or LEDs, you can also use a Neonkey sensor hub connected to a Hikey or Hikey960 development board.

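How the sensor hub is realized depends on the architecture. It is sometimes a separate chip and sometimes included on the same chip as the SoC. A sensor hub should have two key characteristics. It needs enough memory for batching, and it must consume very little power so that the low-power Android sensors can be implemented. Some sensor hubs contain a microcontroller for generic computation and hardware accelerators that enable very low-power computation for low-power sensors.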
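A quick way to reason about "enough memory for batching" is to multiply the event rate, the batching window, and the size of each stored event. The Python sketch below does that arithmetic. The 16-byte record size is an assumption for a compact on-hub format (a timestamp plus three 16-bit axes, padded). Actual hubs choose their own formats.

```python
import math


def fifo_bytes_needed(rate_hz, max_latency_s, bytes_per_event):
    """Memory needed to hold every event produced during one batching window."""
    events = math.ceil(rate_hz * max_latency_s)
    return events * bytes_per_event


# Hypothetical: accelerometer at 50 Hz, batched for 10 s, in an assumed 16-byte record.
need = fifo_bytes_needed(50, 10, 16)
print(need)  # 8000
```

Every additional sensor batched at the same time adds its own term, so the hub's memory requirement grows with the set of sensors expected to batch concurrently.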

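Android does not specify how the sensor hub is architected or how it communicates with the sensors and the SoC (I2C bus, SPI bus, and so on). The design should, however, aim to minimize overall power use.

One option that appears to simplify implementation significantly is to have two interrupt lines from the sensor hub to the SoC. One line carries wake-up interrupts (for wake-up sensors) and the other carries non-wake-up interrupts (for non-wake-up sensors).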
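One way to read why two lines simplify things: the hub can route each event purely by the sensor's wake-up property, without tracking whether the SoC is asleep. The SoC can then leave the non-wake-up line masked while suspended. The Python sketch below shows only the routing decision. `HubEvent`, the line names, `route`, and `route_all` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HubEvent:
    sensor: str
    wake_up: bool  # True if the event comes from a wake-up sensor


WAKE_IRQ = "irq_wake"         # hypothetical line name: allowed to wake the SoC
NON_WAKE_IRQ = "irq_nonwake"  # hypothetical line name: may be masked while the SoC sleeps


def route(event):
    """Pick the interrupt line from the sensor's wake-up property alone."""
    return WAKE_IRQ if event.wake_up else NON_WAKE_IRQ


def route_all(events):
    """Group sensor names by the interrupt line their events would be signaled on."""
    lines = {WAKE_IRQ: [], NON_WAKE_IRQ: []}
    for e in events:
        lines[route(e)].append(e.sensor)
    return lines


print(route_all([HubEvent("significant_motion", True), HubEvent("accelerometer", False)]))
```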

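Sensors

Sensors are the physical MEMS chips that make the measurements. In many cases, several physical sensors are present on the same chip. For example, some chips include an accelerometer, a gyroscope, and a magnetometer. (Such chips are often called 9-axis chips, because each of the three sensors provides data along 3 axes.)

Some of these chips also contain logic to perform common computations such as motion detection, step detection, and 9-axis sensor fusion.

The CDD power and accuracy requirements and recommendations target the Android sensors rather than the physical sensors, but they still affect the choice of physical sensors. For example, the accuracy requirement on the game rotation vector has implications for the required accuracy of the physical gyroscope. It is up to the device manufacturer to derive the requirements for the physical sensors.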
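As a rough, first-order illustration of such a derivation, assume the only error is a constant, uncompensated gyroscope bias b. Real error budgets also include noise, scale-factor error, and temperature effects. Integrating the angular rate over a time window T then accumulates an orientation drift proportional to T, which bounds the acceptable bias:

```latex
\Delta\theta(T) = \int_0^T b \, dt = b\,T
\quad\Longrightarrow\quad
b \le \frac{\Delta\theta_{\max}}{T}
```

Here Δθ_max and T stand for whatever drift limit and time window the CDD specifies for the game rotation vector; no specific values are assumed. With a purely hypothetical limit of 1 degree over 60 seconds, for instance, the bias would have to stay below 1/60 ≈ 0.017 degrees per second.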