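Hearing aid devices (HA) can have improved accessibility on Android-powered mobile devices by using connection-oriented L2CAP channels (CoC) over Bluetooth Low Energy (BLE). CoC uses an elastic buffer of several audio packets to maintain a steady flow of audio, even in the presence of packet loss. This buffer preserves audio quality for hearing aid devices at the expense of latency.

The design of CoC references the Bluetooth Core Specification Version 5 (BT). To stay aligned with the core specification, all multi-byte values on this page shall be read as little-endian.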

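Terminology

- Central - the Android device that scans for advertisements over Bluetooth.

- Peripheral - the hearing instrument that sends advertisement packets over Bluetooth.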

网络拓扑和系统架构

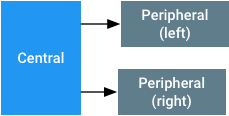

针对助听器使用 CoC 时,网络拓扑会假设存在一个中央设备和两个外围设备(一个在左侧,一个在右侧),如图 1 所示。蓝牙音频系统会将左右外围设备分别视为一个音频接收器。如果由于单耳选配或连接中断而导致某个外围设备缺失,则中央设备会混合左右声道,并将音频传输到剩余的那个外围设备。如果中央设备与这两个外围设备之间的连接均中断,则中央设备会认为指向音频接收器的链接发生中断。在这些情况下,中央设备会将音频路由到其他输出设备。

图 1.用于使用支持 BLE 的 CoC 将助听器与 Android 移动设备配对的拓扑
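The routing rule described above can be sketched as a small function. This is a minimal illustration, not the Android implementation: the function name, the list-of-samples representation, and the choice to mix by averaging the two channels are all assumptions, since the text only says that the central "mixes" the channels.

```python
def route_stereo_frame(left, right, left_connected, right_connected):
    """Decide what each connected peripheral receives for one stereo frame.

    Returns a dict keyed by "left"/"right", or None when no peripheral is
    connected and the audio should go to another output.
    """
    if left_connected and right_connected:
        # Both sides present: each peripheral gets its own channel.
        return {"left": list(left), "right": list(right)}
    if not left_connected and not right_connected:
        # Link to the audio sink is lost: caller routes audio elsewhere.
        return None
    # One side missing: mix both channels into mono (averaging is an assumption).
    mono = [(l + r) // 2 for l, r in zip(left, right)]
    side = "left" if left_connected else "right"
    return {side: mono}
```

For example, with only the left peripheral connected, `route_stereo_frame([100, -100], [300, 100], True, False)` yields a single mono frame for the left side.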

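When the central is not streaming audio data to the peripheral and can maintain a BLE connection, the central should not disconnect from the peripheral. Maintaining the connection allows data communication with the GATT server residing on the peripheral.

When pairing and connecting hearing devices, the central shall:

- Keep track of the most recently paired left and right peripherals.

- Assume the peripherals are in use if a valid pairing exists. The central shall attempt to connect or reconnect with the paired device when the connection is lost.

- Assume the peripherals are no longer in use if a pairing is deleted.

In the cases above, "pairing" refers to the action of registering a set of hearing aids with a given UUID and left/right designators in the OS, not the Bluetooth pairing process.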

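System requirements

To properly implement CoC for a good user experience, the Bluetooth systems in the central and peripheral devices shall:

- Implement a compliant BT 4.2 or higher controller. LE Secure Connections is highly recommended.

- Have the central support at least 2 simultaneous LE links with the parameters described in Audio packet format and timing.

- Have the peripheral support at least 1 LE link with the parameters described in Audio packet format and timing.

- Have LE credit-based flow control [BT Vol 3, Part A, Sec 10.1]. Devices shall support an MTU and MPS size of at least 167 bytes on CoC and be able to buffer up to 8 packets.

- Have LE data length extension [BT Vol 6, Part B, Sec 5.1.9] with a payload of at least 167 bytes.

- Have the central support the HCI LE Connection Update command and comply with the non-zero maximum_CE_Length and minimum_CE_Length parameters.

- Have the central maintain the data throughput for two LE CoC connections to two different peripherals with the connection intervals and payload sizes in Audio packet format and timing.

- Have the peripheral set the MaxRxOctets and MaxRxTime parameters in the LL_LENGTH_REQ or LL_LENGTH_RSP frames to the smallest values required by these specifications. This lets the central optimize its time scheduler when calculating the amount of time needed to receive a frame.

It is strongly recommended that the central and peripheral support the 2M PHY as specified in the BT 5.0 specification. The central shall support audio links of at least 64 kbit/s on both the 1M and 2M PHY. The BLE long range PHY shall not be used.

CoC uses the standard Bluetooth mechanisms for link layer encryption and frequency hopping.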

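ASHA GATT services

A peripheral shall implement the Audio Streaming for Hearing Aid (ASHA) GATT server service described below. The peripheral shall advertise this service when in general discoverable mode so that the central can recognize an audio sink. Any LE audio streaming operation shall require encryption. The BLE audio streaming service consists of the following characteristics: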

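| Characteristic | Properties | Description |
|---|---|---|
| ReadOnlyProperties | Read | See ReadOnlyProperties. |
| AudioControlPoint | Write and Write without Response | Control point for the audio stream. See AudioControlPoint. |
| AudioStatusPoint | Read/Notify | Status report field for the audio control point. See AudioStatusPoint. |
| Volume | Write without Response | Byte between -128 and 0 indicating the amount of attenuation to apply to the streamed audio signal, ranging from -48 dB to 0 dB. A setting of -128 shall be interpreted as fully muted, that is, the lowest non-muted volume level is -127, which is equivalent to -47.625 dB attenuation. At setting 0, a rail-to-rail sine tone streamed shall represent a 100 dBSPL input equivalent on the hearing instrument. The central shall stream in nominal full scale and use this variable to set the desired presentation level in the peripheral. |
| LE_PSM_OUT | Read | PSM to use for connecting the audio channel. To be picked from the dynamic range [BT Vol 3, Part A, Sec 4.22]. |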

The UUIDs assigned to the service and characteristics:

Service UUID: {0xFDF0}

| Characteristic | UUID |
|---|---|
| ReadOnlyProperties | {6333651e-c481-4a3e-9169-7c902aad37bb} |
| AudioControlPoint | {f0d4de7e-4a88-476c-9d9f-1937b0996cc0} |
| AudioStatus | {38663f1a-e711-4cac-b641-326b56404837} |
| Volume | {00e4ca9e-ab14-41e4-8823-f9e70c7e91df} |
| LE_PSM_OUT | {2d410339-82b6-42aa-b34e-e2e01df8cc1a} |

In addition to the ASHA GATT service, the peripheral shall also implement the Device Information Service so that the central can detect the manufacturer name and device name of the peripheral.
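The Volume characteristic described above maps a signed byte to an attenuation in dB. The sketch below assumes a linear mapping of 48 dB over 128 steps (0.375 dB per step), which is consistent with the two data points given (0 maps to 0 dB and -127 maps to -47.625 dB) but is not stated explicitly. The function names are illustrative, and representing "muted" as negative infinity is a choice made for this example.

```python
import struct

MUTED = -128                 # special value: fully muted
DB_PER_STEP = 48.0 / 128     # 0.375 dB per step (assumed linear mapping)


def volume_to_attenuation_db(volume: int) -> float:
    """Convert a Volume characteristic value (-128..0) to attenuation in dB."""
    if not -128 <= volume <= 0:
        raise ValueError("volume must be between -128 and 0")
    if volume == MUTED:
        return float("-inf")  # fully muted
    return volume * DB_PER_STEP


def encode_volume(volume: int) -> bytes:
    """Encode the value as the single signed byte written to the characteristic."""
    if not -128 <= volume <= 0:
        raise ValueError("volume must be between -128 and 0")
    return struct.pack("b", volume)
```

With this mapping, the lowest non-muted setting `volume_to_attenuation_db(-127)` gives -47.625, matching the figure in the table.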

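ReadOnlyProperties

ReadOnlyProperties has the following values:

| Byte | Description |
|---|---|
| 0 | Version - must be 0x01 |
| 1 | See DeviceCapabilities. |
| 2-9 | See HiSyncId. |
| 10 | See FeatureMap. |
| 11-12 | RenderDelay. This is the time, in milliseconds, from when the peripheral receives an audio frame until the peripheral renders the output. These bytes can be used to delay a video to synchronize with the audio. |
| 13-14 | Reserved for future use. Initialize to zeros. |
| 15-16 | Supported Codec IDs. This is a bitmask of supported codec IDs. A 1 in a bit location corresponds to a supported codec. For example, 0x0002 indicates that G.722 at 16 kHz is supported. All other bits shall be set to 0. |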

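DeviceCapabilities

| Bit | Description |
|---|---|
| 0 | Device side (0: left, 1: right) |
| 1 | Indicates whether the device is standalone and receives mono data, or is part of a set (0: monaural, 1: binaural) |
| 2 | Device supports CSIS (0: not supported, 1: supported) |
| 3-7 | Reserved (set to 0) |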

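HiSyncId

This field must be unique across all binaural devices, but it must be the same for the left and right devices of a set.

| Byte | Description |
|---|---|
| 0-1 | ID of the manufacturer. This is the Company Identifier assigned by BTSIG. |
| 2-7 | Unique ID identifying the hearing aid set. This ID must be set to the same value on both the left and the right peripheral. |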

FeatureMap

| Bit | Description |
|---|---|
| 0 | LE CoC audio output streaming supported (Yes/No). |
| 1-7 | Reserved (set to 0). |

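Codec IDs

If a bit is set, then that particular codec is supported.

| ID / Bit number | Codec and sample rate | Required bitrate | Frame time | Mandatory on central (C) or peripheral (P) |
|---|---|---|---|---|
| 0 | Reserved | Reserved | Reserved | Reserved |
| 1 | G.722 @ 16 kHz | 64 kbit/s | Variable | C and P |

IDs 2-15 are reserved. ID 0 is also reserved.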
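As an illustration of the ReadOnlyProperties layout and its sub-fields (DeviceCapabilities, HiSyncId, FeatureMap, and the codec bitmask), the following is a minimal parsing sketch. It assumes the characteristic value is exactly 17 bytes laid out as in the tables above and read as little-endian; the class, field, and function names are made up for this example.

```python
import struct
from dataclasses import dataclass


@dataclass
class ReadOnlyProperties:
    version: int
    is_right: bool          # DeviceCapabilities bit 0
    is_binaural: bool       # DeviceCapabilities bit 1
    supports_csis: bool     # DeviceCapabilities bit 2
    manufacturer_id: int    # HiSyncId bytes 0-1
    set_id: bytes           # HiSyncId bytes 2-7
    coc_streaming: bool     # FeatureMap bit 0
    render_delay_ms: int
    codec_mask: int

    def supports_codec(self, codec_id: int) -> bool:
        """True if the bit for codec_id is set in the codec bitmask."""
        return bool((self.codec_mask >> codec_id) & 1)


def parse_read_only_properties(data: bytes) -> ReadOnlyProperties:
    if len(data) != 17:
        raise ValueError("expected 17 bytes")
    # "<" = little-endian, no padding: 1 + 1 + 8 + 1 + 2 + 2 + 2 = 17 bytes.
    version, caps, hi_sync, features, delay, _reserved, codecs = struct.unpack(
        "<BB8sBHHH", data
    )
    if version != 0x01:
        raise ValueError("unsupported version")
    return ReadOnlyProperties(
        version=version,
        is_right=bool(caps & 0x01),
        is_binaural=bool(caps & 0x02),
        supports_csis=bool(caps & 0x04),
        manufacturer_id=int.from_bytes(hi_sync[0:2], "little"),
        set_id=hi_sync[2:8],
        coc_streaming=bool(features & 0x01),
        render_delay_ms=delay,
        codec_mask=codecs,
    )
```

A central could then check `props.supports_codec(1)` to confirm G.722 at 16 kHz before starting a stream.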

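AudioControlPoint

This control point cannot be used when the LE CoC is closed. See Starting and stopping an audio stream for the procedure description.

| Opcode | Arguments | GATT sub-procedure | Description |
|---|---|---|---|
| 1 «Start» | | Write with response, and expect an additional status notification via the AudioStatusPoint characteristic. | Instructs the peripheral to reset the codec and start the playback of frame 0. The codec field indicates the Codec ID to use for this playback. For example, the codec field is "1" for G.722 at 16 kHz. The audio type bit field indicates the audio type(s) present in the stream. The peripheral shall not request connection updates before a «Stop» opcode has been received. |
| 2 «Stop» | None | Write with response, and expect an additional status notification via the AudioStatusPoint characteristic. | Instructs the peripheral to stop rendering audio. A new audio setup sequence shall be initiated following this stop in order to render audio again. |
| 3 «Status» | | Write without response | Informs the connected peripheral that there is a status update on the other peripheral. The connected field indicates the type of update. |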

AudioStatusPoint

Status report field for the audio control point.

| Opcode | Description |
|---|---|
| 0 | Status OK |
| -1 | Unknown command |
| -2 | Illegal parameters |
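
The status values in the table above are negative for errors, so a receiver has to interpret the notified value as signed. The sketch below assumes the status arrives as a single signed byte; the width is not stated in the table, and the function name and message strings are illustrative.

```python
import struct

STATUS_MESSAGES = {
    0: "status OK",
    -1: "unknown command",
    -2: "illegal parameters",
}


def decode_audio_status(value: bytes) -> str:
    """Interpret the first byte of an AudioStatusPoint value as a signed status."""
    if len(value) < 1:
        raise ValueError("empty status value")
    (code,) = struct.unpack_from("b", value)  # "b" = signed 8-bit integer
    return STATUS_MESSAGES.get(code, f"unrecognized status {code}")
```

For instance, a notified byte of 0xFF decodes to -1, the "unknown command" status.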

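Advertisements for ASHA GATT service

The service UUID must be in the advertisement packet. In either the advertisement or the scan response frame, the peripherals must include a Service Data field:

| Byte offset | Name | Description |
|---|---|---|
| 0 | AD Length | >= 0x09 |
| 1 | AD Type | 0x16 (Service Data - 16-bit UUID) |
| 2-3 | Service UUID | 0xFDF0 (little-endian). Note: This is a temporary ID. |
| 4 | Protocol Version | 0x01 |
| 5 | Capability | |
| 6-9 | Truncated HiSyncID | Four least significant bytes of the HiSyncId. These bytes should be the most random part of the ID. |

The peripherals must have a Complete Local Name data type that indicates the name of the hearing aid. This name is used in the mobile device's user interface so that the user can select the right device. The name should not indicate the left or right channel, since this information is provided in DeviceCapabilities.

If the peripherals put the name and ASHA service data types in the same frame type (ADV or SCAN RESP), then the two data types ("Complete Local Name" and "Service Data for ASHA service") shall appear in the same frame. This lets the mobile device scanner get both pieces of data in the same scan result.

During the initial pairing, the peripherals must advertise at a rate fast enough to let the mobile device quickly discover the peripherals and bond to them.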
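The Service Data layout in the table above can be checked with a short parser. This is a sketch under the assumption that the input is one complete AD structure (length byte included) with exactly the fields listed; the capability byte is returned uninterpreted, and the function and key names are invented for this example.

```python
ASHA_SERVICE_UUID16 = 0xFDF0
AD_TYPE_SERVICE_DATA_16 = 0x16


def parse_asha_service_data(ad: bytes) -> dict:
    """Parse one AD structure carrying ASHA service data."""
    if len(ad) < 10:
        raise ValueError("AD structure too short")
    ad_length, ad_type = ad[0], ad[1]
    if ad_length < 0x09:
        raise ValueError("AD length must be at least 0x09")
    if ad_type != AD_TYPE_SERVICE_DATA_16:
        raise ValueError("not a 16-bit service data field")
    # Multi-byte values are little-endian, so 0xFDF0 is sent as F0 FD.
    uuid = int.from_bytes(ad[2:4], "little")
    if uuid != ASHA_SERVICE_UUID16:
        raise ValueError("not the ASHA service UUID")
    return {
        "protocol_version": ad[4],
        "capability": ad[5],             # left uninterpreted in this sketch
        "truncated_hi_sync_id": ad[6:10],
    }
```

A scanner could compare `truncated_hi_sync_id` across two scan results to guess which advertisers belong to the same set before reading the full HiSyncId over GATT.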

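Synchronizing left and right peripheral devices

To work with Bluetooth on Android mobile devices, the peripheral devices are responsible for ensuring that they are synchronized. Playback on the left and right peripheral devices needs to be synchronized in time: both peripheral devices must play back the corresponding audio samples from the source at the same time.

Peripheral devices can synchronize their time by using a sequence number prepended to each packet of the audio payload. The central guarantees that audio packets that are meant to be played at the same time on each peripheral have the same sequence number. The sequence number is incremented by one after each audio packet. Each sequence number is 8 bits long, so the sequence numbers repeat after 256 audio packets. Since the audio packet size and sample rate are fixed for each connection, the two peripherals can deduce the relative playing time. For more information about the audio packet, see Audio packet format and timing.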
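The wrap-around arithmetic behind the 8-bit sequence number can be sketched as follows. The helper names are illustrative, the 20 ms default frame duration is only an example value, and the distance calculation is unambiguous only while the two packets are fewer than 256 packets apart.

```python
SEQ_MODULUS = 256  # sequence numbers are 8 bits wide


def next_sequence(seq: int) -> int:
    """Sequence number of the packet that follows seq, with wrap-around."""
    return (seq + 1) % SEQ_MODULUS


def packets_ahead(reference: int, seq: int) -> int:
    """How many packets seq lies after reference, modulo 256."""
    return (seq - reference) % SEQ_MODULUS


def relative_play_time_ms(reference: int, seq: int, frame_ms: int = 20) -> int:
    """Playback offset of packet seq relative to packet reference."""
    return packets_ahead(reference, seq) * frame_ms
```

For example, packet 3 that follows packet 250 across the wrap is 9 packets later, which is 180 ms at 20 ms per packet.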

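The central assists by providing triggers to the binaural devices when synchronization may need to happen. These triggers inform each peripheral of the status of its paired peripheral whenever there is an operation that may affect synchronization. The triggers are:

- As part of the «Start» command of AudioControlPoint, the current connection state of the other side of the binaural devices is given.

- Whenever there is a connection, disconnection, or connection parameter update operation on one peripheral, the «Status» command of AudioControlPoint is sent to the other side of the binaural devices.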

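Audio packet format and timing

Packing audio frames (blocks of samples) into packets lets the hearing instrument derive timing from the link layer timing anchors. To simplify the implementation:

- An audio frame shall always match the connection interval in time. For example, if the connection interval is 20 ms and the sample rate is 16 kHz, then the audio frame shall contain 320 samples.

- Sample rates in the system are restricted to multiples of 8 kHz so that a frame always contains an integer number of samples, regardless of the frame time or the connection interval.

- A sequence byte shall be prepended to audio frames. The sequence byte shall count with wrap-around and allow the peripheral to detect buffer mismatch or underflow.

- An audio frame shall always fit into a single LE packet. The audio frame shall be sent as a separate L2CAP packet. The size of the LE LL PDU shall be: audio payload size + 1 (sequence counter) + 6 (4 for the L2CAP header, 2 for the SDU).

- A connection event shall always be large enough to contain 2 audio packets and 2 empty packets for an ACK, to reserve bandwidth for retransmissions. Note that the audio packet may be fragmented by the central's Bluetooth controller. The peripheral must be able to receive more than 2 fragmented audio packets per connection event.

To give the central some flexibility, the G.722 packet length is not specified. The G.722 packet length can change based on the connection interval that the central sets.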
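The sizing rules above reduce to simple arithmetic. The sketch below works through it with illustrative function names and, for simplicity, takes the connection interval in whole milliseconds, which is an assumption of this example rather than a constraint from the text.

```python
SEQUENCE_BYTES = 1   # sequence counter prepended to each audio frame
L2CAP_OVERHEAD = 6   # 4 bytes of L2CAP header + 2 bytes of SDU length


def samples_per_frame(sample_rate_hz: int, interval_ms: int) -> int:
    """Number of samples in one audio frame spanning one connection interval."""
    total = sample_rate_hz * interval_ms
    if total % 1000:
        raise ValueError("frame would not hold an integer number of samples")
    return total // 1000


def audio_payload_bytes(bitrate_bps: int, interval_ms: int) -> int:
    """Encoded audio bytes produced per connection interval."""
    bits_times_1000 = bitrate_bps * interval_ms
    if bits_times_1000 % 8000:
        raise ValueError("payload would not be a whole number of bytes")
    return bits_times_1000 // 8000


def ll_pdu_bytes(payload_bytes: int) -> int:
    """LE LL PDU size: payload + sequence counter + L2CAP overhead."""
    return payload_bytes + SEQUENCE_BYTES + L2CAP_OVERHEAD
```

For G.722 at 64 kbit/s with a 20 ms interval, this gives 320 samples, a 160-byte payload, and a 167-byte PDU, which matches the 167-byte MTU, MPS, and data length figures in the system requirements.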

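For the G.722 output octet format, refer to Rec. ITU-T G.722 (09/2012), section 1.4.4 "Multiplexer".

For all the codecs that a peripheral supports, the peripheral shall support the connection parameters below. This is a non-exhaustive list of configurations that the central can implement.

| Codec | Bitrate | Connection interval | CE Length (1M/2M PHY) | Audio payload size |
|---|---|---|---|---|
| G.722 @ 16 kHz | 64 kbit/s | 20 ms | 5000/3750 µs | 160 bytes |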

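Starting and stopping an audio stream

Before starting an audio stream, the central queries the peripherals and establishes a common codec. The stream setup then proceeds through the following sequence:

1. The PSM and, optionally, RenderDelay are read. The central may cache these values.

2. The CoC L2CAP channel is opened. The peripheral shall grant 8 credits initially.

3. A connection update is issued to switch the link to the parameters required for the chosen codec. The central may do this connection update before the CoC connection in the previous step.

4. Both the central and the peripheral host wait for the update complete event.

5. The audio encoder is restarted and the packet sequence count is reset to 0. A «Start» command with the relevant parameters is issued on the AudioControlPoint. The central waits for a successful status notification of the prior «Start» command from the peripheral before streaming. This wait gives the peripheral time to prepare its audio playback pipeline. During audio streaming, the peripheral should be available at every connection event, even though the current peripheral latency may be non-zero.

6. The peripheral takes the first audio packet from its internal queue (sequence number 0) and plays it.

The central issues the «Stop» command to close the audio stream. After this command, the peripheral does not need to be available on every connection event. To restart audio streaming, go through the above sequence starting from step 5. When the central is not streaming audio, it should still maintain an LE connection for GATT services.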
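The ordering of the setup sequence can be sketched from the central's point of view. Everything here is hypothetical scaffolding: `RecordingLink` is a stand-in that only records calls and is not a real Bluetooth API, the PSM value 0x0080 is a placeholder, and the «Start» arguments are reduced to a codec ID because this sketch does not model the command's byte layout.

```python
STATUS_OK = 0
INITIAL_CREDITS = 8


class RecordingLink:
    """Hypothetical stand-in for a BLE stack; it only records the calls made."""

    def __init__(self):
        self.calls = []

    def read_psm(self):
        self.calls.append("read_psm")
        return 0x0080  # placeholder PSM value

    def open_coc(self, psm):
        self.calls.append("open_coc")
        return INITIAL_CREDITS  # credits granted by the peripheral

    def request_connection_update(self, interval_ms):
        self.calls.append("connection_update")

    def wait_update_complete(self):
        self.calls.append("update_complete")

    def write_start(self, codec_id):
        self.calls.append("start")
        return STATUS_OK  # status notified on AudioStatusPoint

    def write_stop(self):
        self.calls.append("stop")
        return STATUS_OK


def begin_audio(link, codec_id):
    """Step 5: reset the sequence count, send Start, wait for a success status."""
    next_seq = 0
    if link.write_start(codec_id) != STATUS_OK:
        raise RuntimeError("peripheral rejected the Start command")
    return next_seq


def set_up_stream(link, codec_id=1, interval_ms=20):
    """Steps 1 to 5 of the setup sequence, in order."""
    psm = link.read_psm()                          # step 1
    if link.open_coc(psm) < INITIAL_CREDITS:       # step 2
        raise RuntimeError("peripheral granted fewer than 8 credits")
    link.request_connection_update(interval_ms)    # step 3
    link.wait_update_complete()                    # step 4
    return begin_audio(link, codec_id)             # step 5


def stop_stream(link):
    if link.write_stop() != STATUS_OK:
        raise RuntimeError("peripheral rejected the Stop command")
```

Restarting after a stop only needs `begin_audio(link, codec_id)` again, which mirrors the instruction to resume from step 5 rather than reopening the channel.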

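The peripheral shall not issue a connection update to the central. To save power, the central may issue a connection update to the peripheral when it is not streaming audio.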