Android Automotive OS (AAOS) 是在核心 Android 音频堆栈的基础之上打造而成,以支持用作车辆信息娱乐系统的用例。AAOS 负责实现信息娱乐声音(即媒体、导航和通讯声音),但不直接负责具有严格可用性和时间要求的铃声和警告。

虽然 AAOS 提供了信号和机制来帮助车辆管理音频,但最终还是由车辆来决定应为驾驶员和乘客播放什么声音,从而确保对保障安全至关重要的声音和监管声音能被确切听到,而不会中断。

由于 AAOS 利用了 Android 音频堆栈,因此播放音频的第三方应用不需要执行与手机中不同的操作。应用的音频路由由 AAOS 自动管理,如音频政策配置中所述。

当 Android 管理车辆的媒体体验时,应通过应用来代表外部媒体来源(例如电台调谐器),这类应用可以处理该来源的音频焦点和媒体键事件。

Android 声音和声音流

汽车音频系统可以处理以下声音和声音流:

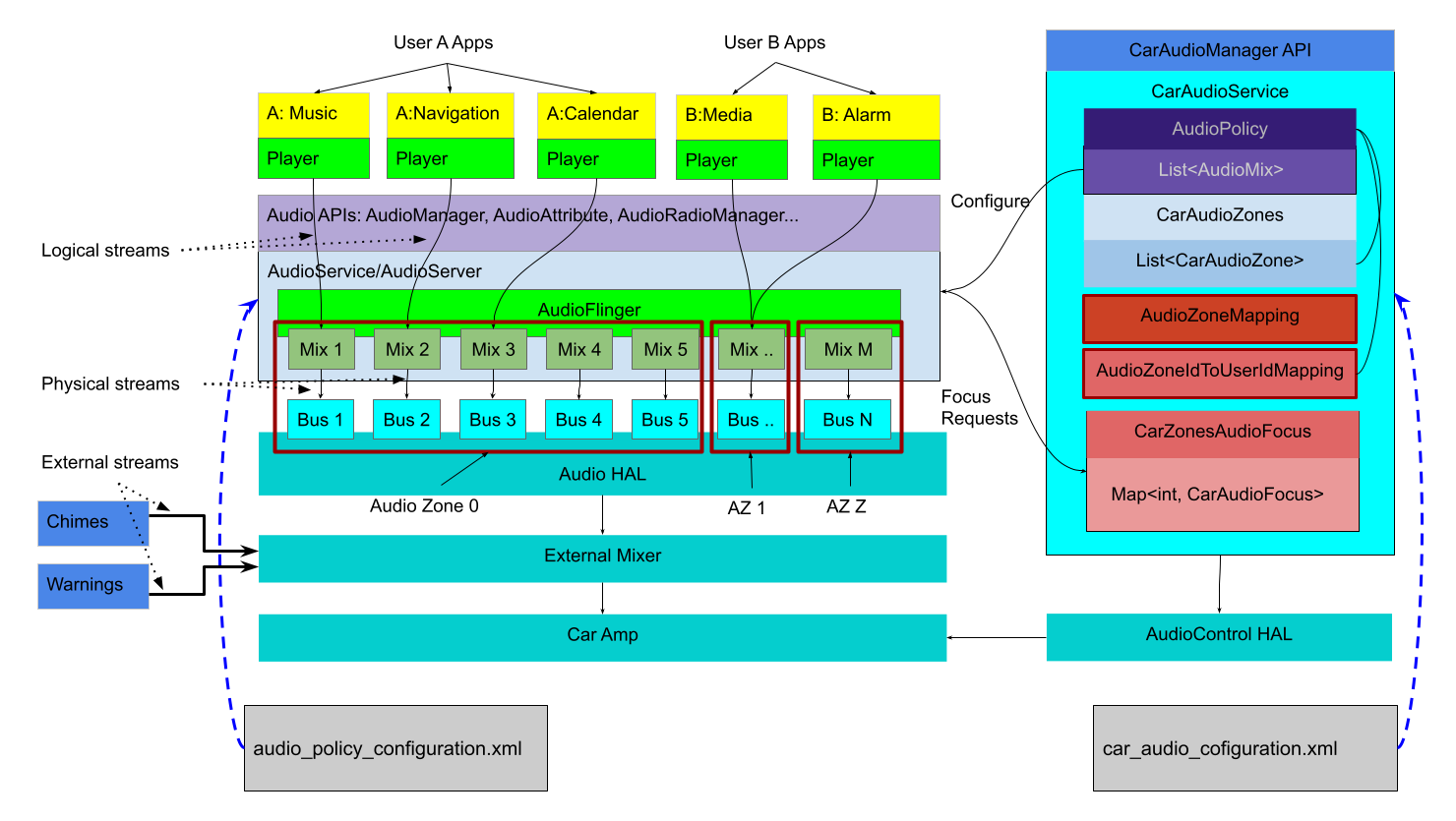

图 1. 以声音流为中心的架构图。

Android 管理来自 Android 应用的声音,同时控制这些应用,并根据其声音类型将声音路由到 HAL 中的输出设备:

逻辑声音流:在核心音频命名法中称为“声源”,使用音频属性进行标记。

物理声音流:在核心音频命名法中称为“设备”,在混音后没有上下文信息。

为了确保可靠性,外部声音(来自独立声源,例如安全带警告铃声)在 Android 外部(HAL 下方,甚至是在单独的硬件中)进行管理。系统实现者必须提供一个混音器,用于接受来自 Android 的一个或多个声音输入流,然后以合适的方式将这些声音流与车辆所需的外部声源组合起来。Android 控件 HAL 为在 Android 外部生成的声音提供了一种不同的机制来与 Android 通信:

- 音频焦点请求

- 增益或音量限制

- 增益和音量变化

音频 HAL 实现和外部混音器负责确保对保障安全至关重要的外部声音能够被用户听到,而且负责在 Android 提供的声音流中进行混音,并将混音结果路由到合适的音响设备。

Android 声音

应用可以有一个或多个通过标准 Android API(如用于控制焦点的 AudioManager 或用于在线播放的 MediaPlayer)交互的播放器,以便发出一个或多个音频数据逻辑声音流。这些数据可能是单声道声音,也可能是 7.1 环绕声,但都会作为单个声源进行路由和处理。应用声音流与 AudioAttributes(可向系统提供有关应如何表达音频的提示)相关联。

逻辑声音流通过 AudioService 发送,并路由到一个(并且只有一个)可用的物理输出声音流,其中每个声音流都是混音器在 AudioFlinger 内的输出。音频属性在混合到物理声音流后将不再可用。

接下来,每个物理声音流都会传输到音频 HAL,以在硬件上呈现。在汽车应用中,呈现硬件可能是本地编解码器(类似于移动设备),也可能是车辆物理网络中的远程处理器。无论是哪种情况,音频 HAL 实现都需要提供实际样本数据并使其能被用户听见。

外部声音流

如果声音流因认证或计时原因而不应经由 Android,则可以直接发送到外部混音器。从 Android 11 开始,HAL 现在能够针对这些外部声音请求焦点,以通知 Android,使其能够采取适当措施(例如暂停媒体或阻止其他应用获得焦点)。

如果外部声音流是应与 Android 正在生成的声音环境交互的媒体源(例如,当外部调谐器处于开启状态时,停止 MP3 播放),则那些外部声音流应由 Android 应用表示。此类应用将代表媒体来源(而非 HAL)请求音频焦点,并根据需要通过启动/停止外部声音源来响应焦点通知,以符合 Android 音频焦点政策规定。

应用还负责处理媒体键事件,例如播放和暂停。如需控制此类外部设备,建议使用的一种机制是 HwAudioSource。如需了解详情,请参阅在 AAOS 中连接输入设备。

输出设备

在音频 HAL 级别,设备类型 AUDIO_DEVICE_OUT_BUS 提供用于车载音频系统的通用输出设备。总线设备支持可寻址端口(其中每个端口都是一个物理声音流的端点),并且应该是车辆内唯一受支持的输出设备类型。

系统实现可以针对所有 Android 声音使用一个总线端口,在这种情况下,Android 会将所有声音混合在一起,并将混音结果作为一个声音流进行传输。此外,HAL 可以分别为每个 CarAudioContext 提供一个总线端口,以允许并发传输任何声音类型。这样一来,HAL 实现就可以根据需要混合和闪避不同的声音。

音频上下文到输出设备的分配是通过 car_audio_configuration.xml 文件完成的。如需了解详情,请参阅音频政策配置。